[Photo: Lea Suzuki/The San Francisco Chronicle via Getty Images]

What Dilbert’s Creator Taught Me About Speaking Up

When I heard the news that Scott Adams had died on January 13, 2026, my mind went immediately to a filing cabinet. I have a folder somewhere, probably stuffed behind some old INCOSE conference programs in my home office, where I used to collect Dilbert cartoons for use in SE training. Not the most rigorous filing system, I’ll admit, but a useful one. Over the years, I would pull a strip or two into a slide deck whenever I needed to surface a conversation that a room full of engineers would otherwise find too uncomfortable to start on their own.

The thing about Dilbert was that it gave people cover. You could laugh at the cartoon and then, while everyone was still chuckling, slide in the question: “So... does that sound familiar to anyone?” Suddenly, the room would open up in ways it simply wouldn’t have if you’d opened directly with “let’s talk about why management keeps ignoring your technical concerns.” Humor was the solvent. Adams understood that intuitively, which is probably why the strip ran for nearly four decades.

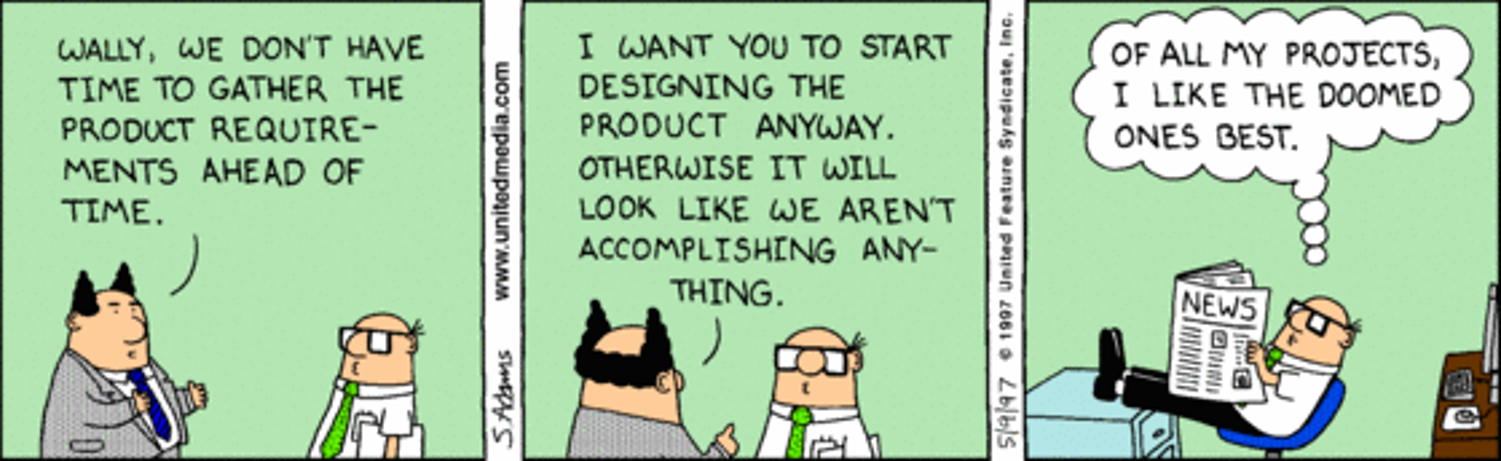

The INCOSE community understood it too, at least for a while. The August 2007 INCOSE SE Handbook v3.1 included a Dilbert cartoon as Figure 7-1, a strip that illustrated with brutal economy how doomed a project becomes when design proceeds without understanding requirements [4]. Finding that in an official INCOSE publication always made me smile, not just because the strip was funny, but because it meant someone on the editorial team understood that an uncomfortable truth lands harder when it arrives with a punchline. Great stuff. I always considered it one of the more honest moments in SE’s official literature.

Adams made his career out of exactly that kind of honesty, and it’s worth being clear about what kind of honesty that was. Dilbert was never simply about bad bosses or lazy coworkers. At its core the strip was a systems critique, and a fairly sophisticated one. Adams drew organizations the way systems engineers are trained to analyze them: as collections of incentive structures, feedback loops, and decision authorities, most of which were badly aligned with the stated objectives of the people supposedly running them. The Pointy-Haired Boss is a convenient cartoon villain, but he’s also something more specific: a decision-maker structurally insulated from the technical consequences of his own decisions, a failure mode that systems engineers encounter in real programs all the time [3]. Engineers identified with Dilbert not because the character was heroic but because he was constrained by a system behaving exactly as designed.

Adams’ books pushed this analysis into territory that Systems Engineering doesn’t always talk about directly. Works like How to Fail at Almost Everything and Still Win Big and Loserthink: How Untrained Brains Are Ruining America returned repeatedly to themes of persuasion, incentive design, and the gap between technical correctness and organizational change [5]. These resonated with engineers who had discovered, often the hard way, that being right about a technical risk is not the same as successfully communicating it. Adams was making a point that the INCOSE SE Handbook makes in its own more measured way: large complex systems change when information is framed in ways that decision-makers can actually absorb and act on. The friction isn’t always in the facts. It’s often in the transmission.

His later career is where things get genuinely complicated, and where the SE framing becomes more interesting and also more demanding. Over the last decade, Adams turned increasingly toward questioning dominant institutional narratives. He publicly predicted Donald Trump’s electoral success at a time when most commentators dismissed the possibility outright [6]. He was an outspoken and consistent critic of the COVID-19 pandemic response, framing his objections not in partisan terms but in the language of persuasion, second-order effects, and the long-term costs of coercive compliance [7]. He used Dilbert to satirize ESG programs and corporate diversity initiatives, arguing they were performative responses to social pressure rather than genuine risk-informed management, a line of criticism that contributed to the strip being dropped by hundreds of newspapers and his distributor severing ties [8, 10].

And then, in February 2023, he made comments about a Rasmussen poll that he described as hyperbole and critics described as something considerably worse [9]. The professional consequences were swift and severe. Platforms gone. A reputation built over decades, largely gone.

I am not going to sort out for you here whether Adams was right or wrong in his various positions, partly because that would require more columns than this one, and partly because it’s not actually the most interesting question from a systems engineering standpoint. The more interesting question is what the INCOSE SE Handbook says about the ethical obligations of systems engineers in exactly these circumstances.

It says quite a lot, as it turns out. The Handbook is clear that ethical practice requires engineers to recognize hazards and risks and communicate those concerns clearly and promptly, even when doing so is inconvenient or unpopular [11]. This obligation is not narrowly technical. It extends to organizational, social, and policy-level systems when those systems have the potential to cause harm. The Risk Management and Quality Management processes both reinforce this expectation: risks must be identified, escalated, and examined rather than suppressed, and quality processes depend on the transparent reporting of nonconformances, not on punishing the people who surface them [12, 13]. When challenges are silenced rather than examined, the Handbook would diagnose the result not as organizational discipline but as a process failure.

Seen through that lens, Adams’ willingness to question accepted narratives over many years lines up more closely with the ethical posture the Handbook describes than most of us would find comfortable to acknowledge. That doesn’t mean his conclusions were correct. It doesn’t mean the manner in which he expressed them was always defensible. It does mean that the pattern itself, a technically minded person repeatedly raising uncomfortable questions about systems that appeared to be producing bad outcomes, and paying a significant professional price for doing so, is recognizable to anyone who has ever tried to escalate a risk concern into a headwind of organizational resistance.

Dilbert taught us to laugh at broken systems. Adams’ later career is a considerably less comfortable lesson: that broken systems frequently punish the people who point them out, and that knowing this in advance is rarely sufficient protection against it. The Handbook doesn’t promise shelter from consequences. What it establishes is that silence in the face of perceived risk is itself an ethical failure, not a neutral or safe choice.

As a Systems Engineer, are you prepared to question and challenge the very system in which you operate when you believe a risk or danger is being ignored?

Because Scott Adams, whatever else you think of him, was.

Optional Reader Resource

This is a practical checklist for Systems Engineers who sense that risk, quality, and ethics are drifting out of alignment but lack a tool to diagnose and articulate those concerns before the consequences become unavoidable.

References

[1] CBS News. “Scott Adams, creator of the Dilbert comic strip, dies at 68.” 13 Jan. 2026. https://www.cbsnews.com/news/scott-adams-dies-age-68-dilbert-comic-strip/

[2] Bregel, Sarah. “‘Dilbert’ Taught White-Collar Workers How to Talk About Hating Work.” Fast Company, 14 Jan. 2026.

[3] Ennes, Meghan. “How ‘Dilbert’ Practically Wrote Itself.” Harvard Business Review, 18 Oct. 2013.

[4] Systems Engineering Handbook: A Guide for System Life Cycle Processes and Activities. Edited by Cecilia Haskins, version 3.1, INCOSE, 2007. INCOSE-TP-2003-002-03.1. Figure 7-1.

[5] “Scott Adams: Books, Biography, Latest Update.” Amazon. https://www.amazon.com/stores/Scott-Adams/author/B004N47QVK

[6] Weissmueller, Zach. “As Trump Coasts to the Nomination, Remember That the Cartoonist Behind Dilbert Saw It All Coming.” Reason, 7 May 2016.

[7] Adams, Scott. “Episode 1460 Scott Adams: I Admit I Was Wrong About the Pandemic.” YouTube, Real Coffee with Scott Adams, 6 Aug. 2021.

[8] Quinson, Tim. “‘Dilbert’ Becomes the Voice of ESG Opposition.” Bloomberg, 21 Sept. 2022.

[9] Ross, Janell. “The Death of Dilbert and False Claims of White Victimhood.” Time, 1 Mar. 2023.

[10] Helmore, Edward. “Dilbert cartoon dropped by US newspapers over creator’s racist comments.” The Guardian, 26 Feb. 2023.

[11] INCOSE Systems Engineering Handbook: A Guide for System Life Cycle Processes and Activities. Edited by David D. Walden et al., 5th ed., Wiley, 2023. Section 5.1.4: Ethics.

[12] Ibid., Section 2.3.4.4: Risk Management Process.

[13] Ibid., Section 2.3.3.5: Quality Management Process.