Image generated using artificial intelligence for illustrative purposes. The irony is not lost.

About thirty years ago, I took my wife and our six kids to an air show. Like most people there, we were pulled toward the most striking aircraft on the flight line: a stealth fighter. The pilot was standing nearby, clearly comfortable with the attention, and his name was stenciled on the side of the jet in that particular way that said this one is mine. He was used to questions about speed, maneuverability, and mission capability. Those were the questions people came to air shows to ask. As a budding systems engineer at the time, I had started thinking about systems through a life-cycle lens, so I asked something completely different.

“Is this thing hard to maintain?”

He paused, rolled his eyes slightly, and said, “You have no idea.”

That response stayed with me. The aircraft clearly met its operational objectives, but only by accepting extraordinary sustainment complexity. Performance had been optimized, and supportability paid the price. That was not necessarily a systems engineering failure; more likely, it was a deliberate trade-off, made with eyes open. But the lesson was clear enough: no matter how advanced a system becomes, it does not escape the life cycle. It simply shifts where the cost, risk, and operational friction will eventually appear.

(USAF Photo) U.S. Air Force airmen shut down an F-117 Nighthawk following its farewell flyover ceremony at Wright-Patterson Air Force Base on March 11, 2008, ahead of the aircraft’s retirement later that year.

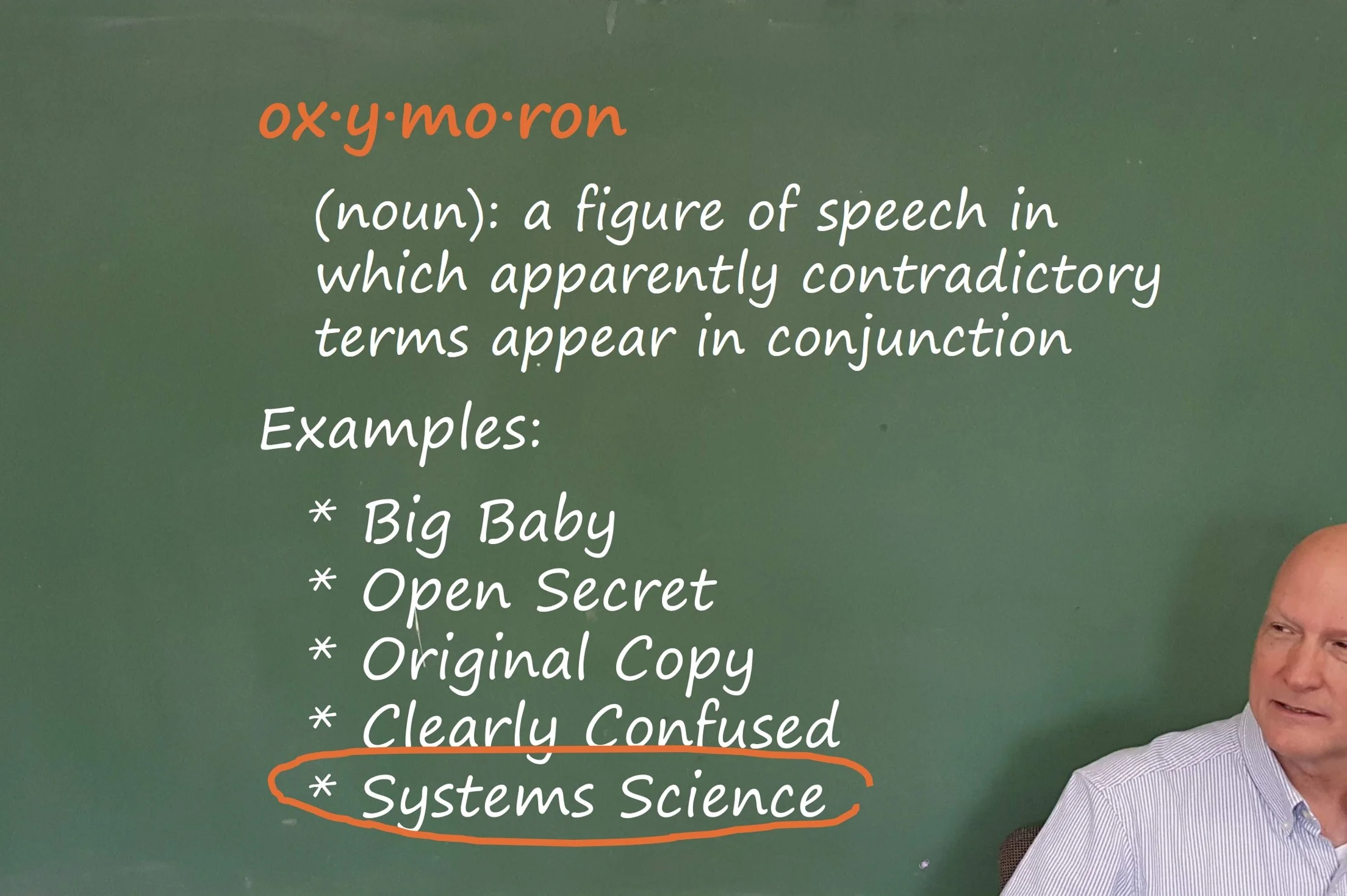

I have been thinking about that pilot lately, because discussions of artificial intelligence increasingly invoke the concept of the Singularity. The term was popularized by Ray Kurzweil, who described it as a period in which technological change becomes so rapid and profound that human life is irreversibly transformed [1]. It is worth noting that the Singularity is not a universally accepted framework within AI research. Many practitioners treat it as speculative or overly metaphorical [2]. But it has become influential enough in public discourse and policy conversations that it deserves examination through a systems engineering lens rather than just a philosophical one.

What strikes me about Singularity narratives is what they tend to emphasize and what they quietly leave out. The emphasis is almost entirely on capability and acceleration: systems that improve themselves autonomously, scale with minimal marginal cost, recover from faults without human intervention, and ultimately reduce or eliminate human cognitive limitations. Human-machine merging, including concepts like mind uploading, is sometimes presented as a logical endpoint of this progression [3, 4]. The writing is vivid and the ambition is genuinely extraordinary. What is largely absent is any serious reckoning with the back half of the life cycle. Once a system is deployed, somebody still has to keep it running, manage how it evolves, and decide when and how to shut it down. Singularity narratives tend to assume those problems will solve themselves. They do not.

Systems engineers are trained to ask exactly those questions, and the questions are uncomfortable precisely because they are unavoidable. How will this system be maintained over time? Who provides that support, under what constraints, and at what cost? How does the system fail, degrade, or drift from its intended configuration? What does retirement, decommissioning, or disposal actually entail? These questions are challenging enough when applied to conventional software-intensive systems. For a system in which you can no longer draw a clean line between what the system does and what the system is, maintenance and disposal are not merely administrative concerns. They are ethical ones.

If cognition is augmented, migrated, or replicated, what exactly is being supported? A biological substrate, a digital substrate, or some evolving combination of both? If multiple instantiations exist, which one is authoritative? If state and memory are backed up, who governs their retention, restoration, or deletion? Disposal in this context is not merely a technical concern. It is ethical, legal, and societal [5, 6]. Yet Singularity narratives tend to treat these issues as secondary, assuming they will be resolved by future innovation or emergent social norms. History suggests otherwise.

The stealth fighter pilot’s response illustrates a broader principle that scales uncomfortably well. Advanced capability does not eliminate sustainment burden. It concentrates and amplifies it. Stealth platforms have required specialized materials, controlled environments, intensive inspection regimes, and an extraordinary number of maintenance hours per flight hour [7]. Over time, sustainment came to dominate life-cycle cost and operational availability. The aircraft succeeded operationally, but only through continuous, resource-intensive support that air show crowds, dazzled by the performance numbers, rarely thought to ask about.

Now scale that lesson to AI-enabled systems that operate continuously rather than episodically, adapt autonomously rather than through managed upgrades, interact with other agents rather than static interfaces, and accumulate state and learned behavior over time. In such systems, support becomes a first-order design driver rather than an afterthought. Configuration management is no longer merely administrative. It is existential [5]. A long-lived, high-consequence system that cannot be safely supported or responsibly decommissioned is an incomplete system, regardless of how impressive its performance may be during the demonstration.

Viewed through a systems engineering lens, the Singularity looks less like a destination than a stress test for everything we have not figured out yet. It tests whether we can maintain control and accountability as systems grow more complex than the organizations governing them, whether we can define explicit end-of-life criteria for systems whose functionality and identity may change over time, and whether we can govern systems that evolve faster than the regulatory and organizational structures built to manage them. A system passes this test not by exceeding human capability, but by remaining controllable, supportable, and terminable under defined conditions.

Ignoring support and disposal does not make these problems disappear. It defers them until they are more expensive, more hazardous, and considerably more difficult to reverse.

That moment at the air show was a reminder that good engineering is not dazzled by performance alone. It is grounded in realism and accountability across the full life cycle. As discussions of AI acceleration and human-machine integration continue, systems engineers have a specific and valuable role to play. Not as futurists or alarmists, but as practitioners trained to ask the questions that optimists prefer to leave for later.

The question I asked that pilot at the air show still stands.

Who will maintain this, at what cost, and what happens when its time is over?

Optional Reader Resource

The Support and Disposal Readiness Checklist is a practitioner-focused tool aligned with the structure and intent of the INCOSE Systems Engineering Handbook v5. It is designed to help engineers, technical leads, and program managers assess whether sustainment and retirement considerations have been deliberately integrated into system architecture, requirements, planning, verification, and governance activities.

References

[1] Kurzweil, Ray. The Singularity Is Near: When Humans Transcend Biology. Viking, 2005.

[2] Russell, Stuart. Human Compatible: Artificial Intelligence and the Problem of Control. Viking, 2019.

[3] Kurzweil, Ray. The Singularity Is Nearer: When We Merge with AI. Viking, 2024.

[4] Martin, Paul. “When Models and Metaphors Are Dangerous.” SE Scholar, 2012. https://se-scholar.com/se-blog/2012/03/when-models-and-metaphors-are-dangerous.html

[5] International Council on Systems Engineering (INCOSE). Systems Engineering Handbook: A Guide for System Life Cycle Processes and Activities. 5th ed., Wiley, 2023. https://www.incose.org/resources-publications/technical-publications/se-handbook/

[6] Floridi, Luciano. The Ethics of Artificial Intelligence: Principles, Challenges, and Opportunities. Oxford University Press, 2023.

[7] U.S. Government Accountability Office. F-35 Joint Strike Fighter: Development Is Nearly Complete, but Deficiencies Found in Testing Need to Be Resolved. GAO-18-321, Apr. 2018. https://www.gao.gov/products/gao-18-321