I use Boeing in my classes all the time.

Not because they're perfect (no organization is), but because they make a clean, intuitive example of something the INCOSE SE Handbook calls the Supply Process. [1] When I'm explaining to students why, as Systems Engineers, we need to worry about the Agreement Processes, I ask them to imagine they're FedEx. FedEx needs a new plane. Can FedEx build a plane? Of course not. So they go find someone who can. They go to Boeing.

It's a simple example, but it captures something important: the supplier isn't just a vendor. They're the organization you trust to build the thing you cannot. The acquirer brings the requirements, the mission need, and the operational concept. The supplier brings the expertise, the production capability, and most critically, the quality assurance that makes the whole arrangement worth anything.

A few years ago, I was at an engineering conference and ran into some Boeing representatives. I just had to ask them, "Do you actually make planes for FedEx?" They said yes, and now my little classroom thought experiment had more gravitas.

I bring this up because reading about Boeing's recent troubles has put me in an awkward spot from a teaching perspective. The company I've been holding up as a model of what a capable supplier looks like has spent the last several years making headlines for exactly the wrong reasons. And that's worth thinking about from a Systems Engineering perspective.

What Actually Happened

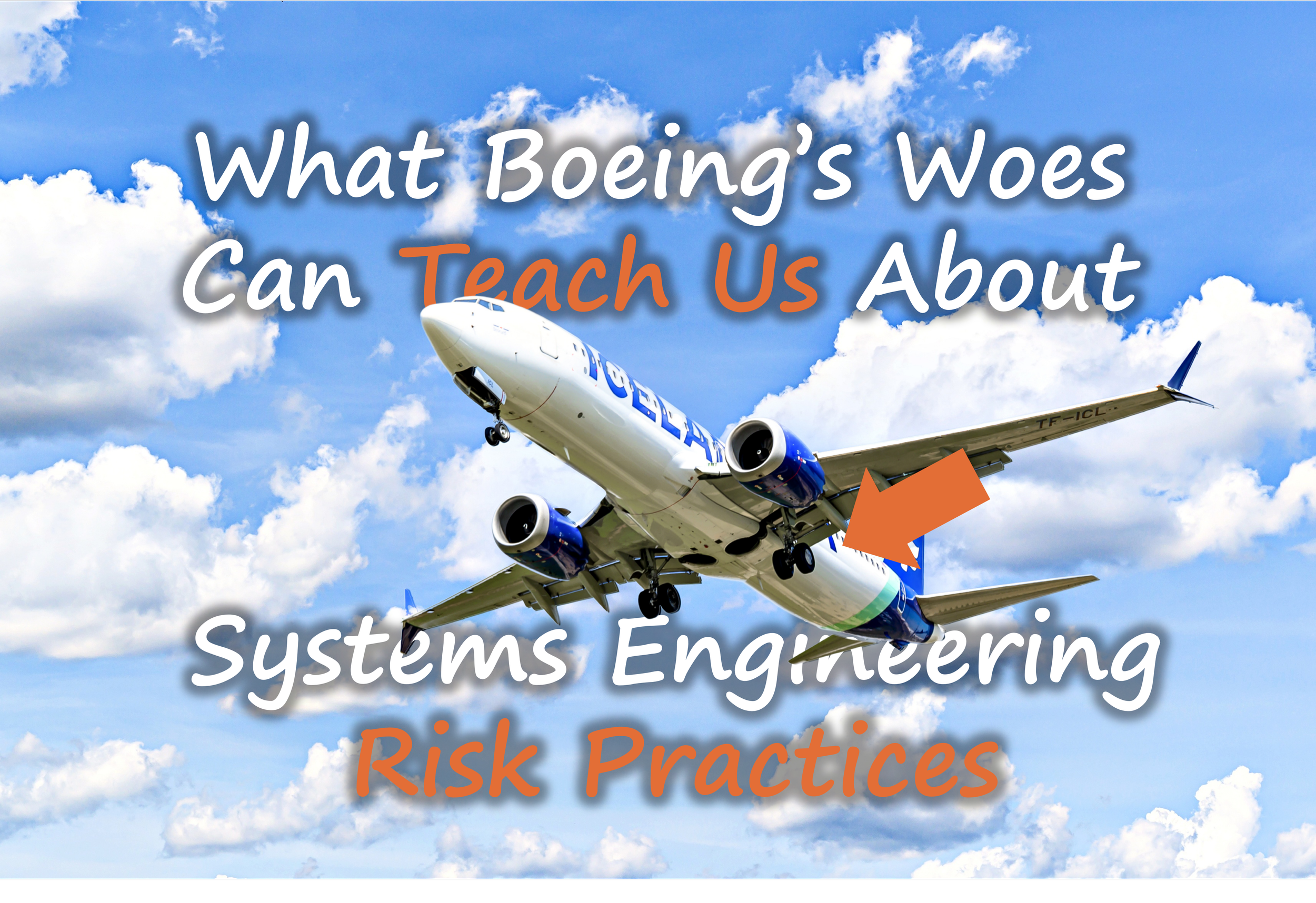

In January 2024, an Alaska Airlines Boeing 737-9 MAX lost a mid-cabin door plug shortly after takeoff, forcing an emergency landing and leading to the grounding of 737-9 MAX aircraft across multiple airlines. [2] This followed years of scrutiny stemming from the two 737 MAX crashes, in 2018 and 2019, that together killed 346 people, and ongoing concerns about manufacturing quality on the 787 Dreamliner line. [3]

The investigations that followed painted a troubling picture, not of a single catastrophic design error, but of a gradual erosion of the practices that prevent small problems from becoming large ones. Internal audits had flagged issues. Employees had raised concerns. Whistleblowers had spoken. [4] And yet the risks kept accumulating, quietly, until one of them surfaced in flight.

This is what makes Boeing interesting to a systems engineer. It isn't a story about a part that failed. It's a story about a risk management culture that stopped working.

The Acquirer's Dilemma: Trusting What You Cannot See

Here's where Boeing's situation gets philosophically uncomfortable for a systems engineer, and why it connects back to my FedEx classroom example in a way I didn't originally intend.

The INCOSE Handbook is quite direct about what the Acquisition Process demands of an acquirer. It isn't enough to write a good contract and wait for delivery. The Handbook states that the role of the acquirer demands familiarity with the Technical, Technical Management, and Organizational Project-Enabling Processes, because it is through those very processes that the supplier executes the agreement. [5] In plain English: if you're FedEx and you hire Boeing to build your plane, you need to understand how Boeing manages risk, quality, and configuration well enough to know whether those processes are actually working.

This puts the acquirer in a genuinely difficult spot. You went to Boeing precisely because you can't build the plane yourself. And yet the Handbook tells you that you need to understand, at least at a process level, how Boeing does what it does. Not to do it for them, but to recognize when something is going wrong.

We now know that Boeing's Technical Management processes, particularly Risk Management and Quality Management, were under serious stress in the years leading up to the door plug incident. Manufacturing nonconformances were not being consistently elevated. Inspection steps were being skipped or inadequately verified. [4] The internal processes that a regulator like the FAA or an airline like Alaska Airlines implicitly trusted were eroding in ways that weren't visible from outside the organization until a door plug blew out at 16,000 feet.

Where the Risk Management Process Broke Down

What the investigations described is what the safety literature calls normalization of deviance, a process by which known problems are quietly reclassified as acceptable because they haven't yet caused catastrophic outcomes. [6] The signals were still there: the audits, the employee concerns, the defect trends. They just stopped being treated as warnings.

A small manufacturing lapse, unaddressed, can propagate through integration, operations, and certification in ways that aren't obvious when you're looking at any single data point. The INCOSE Handbook is explicit that leading indicators, such as rework trends, training gaps, and supplier defect rates, are essential for detecting emerging risks before they become operational failures. [7] Lagging metrics tell you what already happened. Leading indicators tell you where you're headed.
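
One way to picture the difference is a small trend check over a few indicators. The Python sketch below is only an illustration: the indicator names, the monthly numbers, and the 0.5 threshold are invented for this example, and the functions `trend_slope` and `flag_rising` are hypothetical helpers, not anything prescribed by the INCOSE Handbook. It fits a straight line to each series and flags the ones that are climbing.

```python
def trend_slope(values):
    """Least-squares slope of values against their index (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(values))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator


def flag_rising(indicators, threshold=0.0):
    """Return the names of indicators whose trend slope exceeds threshold."""
    return [name for name, series in indicators.items()
            if trend_slope(series) > threshold]


# Hypothetical monthly data, invented for illustration only.
indicators = {
    "rework_hours": [120, 125, 131, 140, 152, 170],
    "supplier_defects_per_1000": [4.0, 3.8, 4.1, 3.9, 4.0, 3.9],
    "overdue_training_items": [10, 12, 15, 15, 19, 24],
}

# Units differ per indicator, so a single threshold is a simplification.
print(flag_rising(indicators, threshold=0.5))
```

With these invented numbers, rework hours and overdue training items are flagged while the flat supplier defect rate is not. No single month looks alarming on its own; the slope is what carries the warning.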

Risk acceptance decisions that become routine rather than exceptional are a warning sign in themselves. When individual risks are accepted one at a time, each appearing manageable in isolation, they can collectively exceed an organization's actual risk tolerance without any single decision looking obviously wrong. [8] That accumulation is hard to see from inside it.
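
A bit of arithmetic makes the accumulation concrete. The Python sketch below assumes, purely for illustration, that each accepted risk is independent and has a known probability of occurring; the 2% figure, the 10% tolerance, and the function name `combined_probability` are hypothetical and are not taken from ISO 31000 or from any Boeing data.

```python
from math import prod


def combined_probability(risk_probabilities):
    """Probability that at least one of several independent risks occurs."""
    return 1.0 - prod(1.0 - p for p in risk_probabilities)


# Hypothetical values: each accepted risk has a 2% chance of occurring,
# and the organization's stated overall tolerance is 10%.
TOLERANCE = 0.10
accepted = []

for decision in range(1, 9):
    accepted.append(0.02)
    total = combined_probability(accepted)
    status = "within tolerance" if total <= TOLERANCE else "EXCEEDS tolerance"
    print(f"after decision {decision}: {total:.1%} ({status})")
```

Under these assumptions every individual decision adds only a 2% risk, yet the combined probability is about 9.6% after five acceptances and about 11.4% after the sixth, past the stated tolerance. Real risks are rarely independent, so this is a lower-fidelity picture than a real program would need, but it shows how the total can cross a line that no single decision crossed.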

And then there's the one that doesn't appear on most risk checklists: psychological safety. Reports surrounding Boeing consistently describe tension between engineering judgment and management pressure, particularly around schedule and financial objectives. [9] When engineers don't feel empowered to stop production, elevate concerns, or challenge assumptions, the feedback mechanisms that risk management depends on quietly stop working. A strong risk culture treats bad news as valuable data. A weak one treats it as an obstacle to delivery.

What This Means for Practicing Systems Engineers

I still use the FedEx example in class. The underlying SE principle is sound; what's changed is that I now spend a little more time on what makes the supplier relationship actually work. The INCOSE Handbook notes that relationship-building and trust between the parties are nonquantifiable qualities that, while not a substitute for good processes, make human interactions agreeable. [1] Boeing's story illustrates that rather bluntly: trust is load-bearing. When it erodes within the supplier organization itself, between engineers and management, no contract language fills that gap.

Large-scale engineering failures rarely stem from a single error. They emerge when small risks accumulate unchecked across technical, organizational, and cultural boundaries, and when the culture has quietly stopped treating those accumulations as something worth escalating. [10]

The question worth asking about your own program isn't whether you have a risk register. It's whether the people closest to the work feel like it's safe to put something on it.

References

[1] INCOSE SEH5, Sec. 2.3.2.2: Supply Process. International Council on Systems Engineering. (2023). Systems Engineering Handbook (5th ed.). Wiley.

[2] Federal Aviation Administration. (2024, January 6). Updates on Boeing 737-9 MAX aircraft. https://www.faa.gov/newsroom/updates-boeing-737-9-max-aircraft

[3] Department of Justice. (2021, January 7). Boeing charged with 737 MAX fraud conspiracy and agrees to pay over $2.5 billion. https://www.justice.gov/archives/opa/pr/boeing-charged-737-max-fraud-conspiracy-and-agrees-pay-over-25-billion

[4] Reuters. (2024, March 4). US FAA hits Boeing 737 MAX production for quality control issues. https://www.reuters.com/business/aerospace-defense/us-faa-says-boeing-737-max-production-audit-found-compliance-issues-2024-03-04/

[5] INCOSE SEH5, Sec. 2.3.2.1: Acquisition Process.

[6] Vaughan, D. (1996). The Challenger launch decision: Risky technology, culture, and deviance at NASA. University of Chicago Press.

[7] INCOSE SEH5, Sec. 2.3.4.4: Risk Management Process.

[8] ISO. (2018). ISO 31000: Risk management — Guidelines. https://www.iso.org/standard/65694.html

[9] Robison, P. (2021). Flying blind: The 737 MAX tragedy and the fall of Boeing. Doubleday.

[10] Leveson, N. (2011). Engineering a safer world: Systems thinking applied to safety. MIT Press.