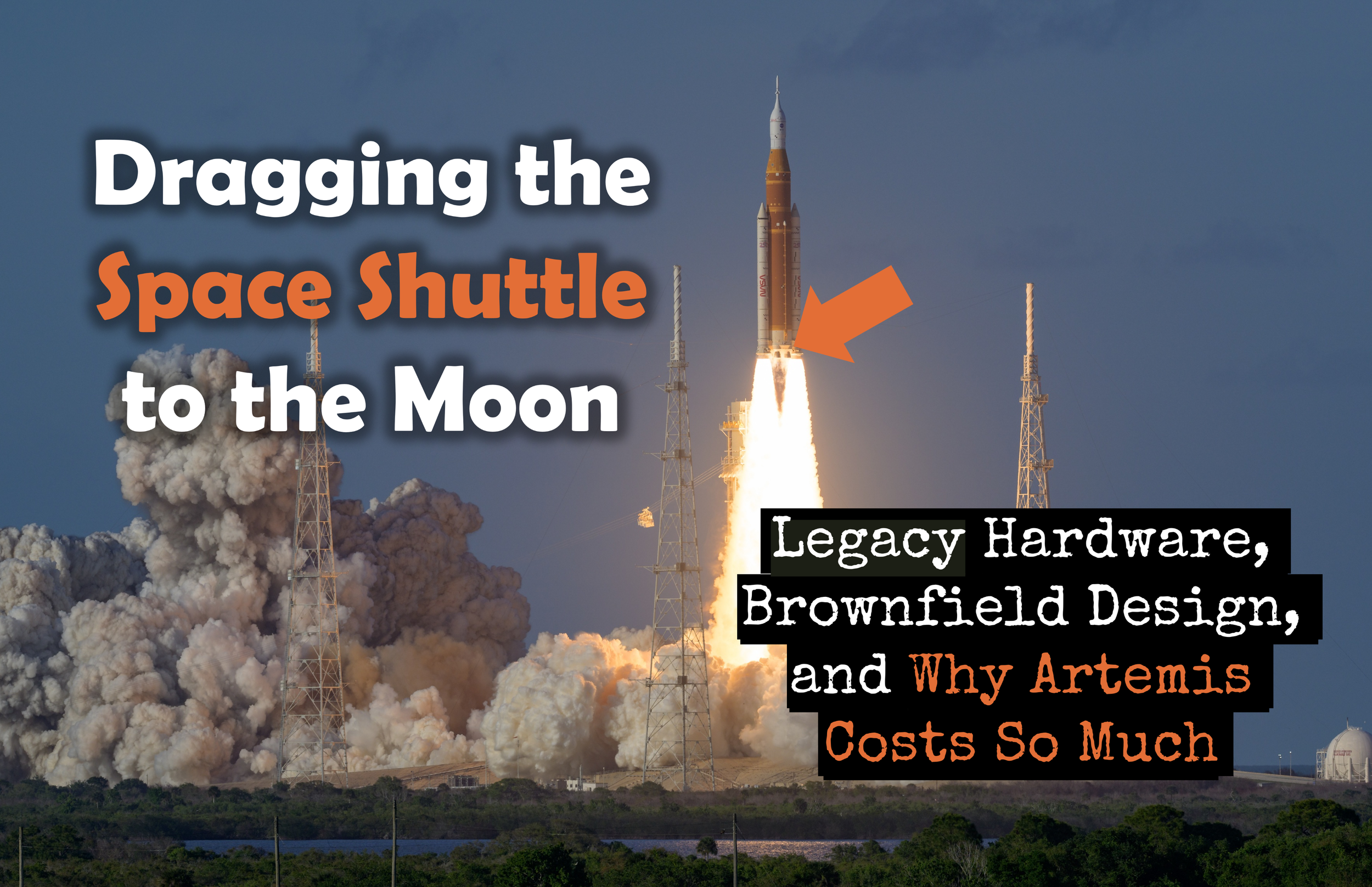

Photo by John Kraus - SpaceX Starship Flight 7 vehicle experiencing an anomaly during ascent. January 16, 2025.

Turning Public Failure into Engineering Progress

Failure has come up on this blog before, more than once, and probably for good reason. Systems engineering is a discipline that takes failure seriously as a source of information rather than just an outcome to be avoided, and I have found over the years that the subject rewards revisiting. Two earlier posts staked out this territory, and both are worth recalling before turning to what SpaceX has been doing in South Texas.

The first, “Failure and the Importance of Lessons Learned,” started from a simple observation: most experienced systems engineers carry at least one significant failure with them, and the real value of that experience depends entirely on whether the lessons were deliberately captured and institutionalized before the program closed and the team dispersed [1]. Failure that gets examined and documented becomes organizational knowledge. Failure that gets quietly set aside just waits to happen again somewhere else, to someone else, probably on a tighter schedule.

The second post, “The Value of Failure,” drew a sharper line between two kinds of failure that look superficially similar but are actually very different animals [2]. A small, controlled failure discovered during evaluation, while the program still has the time and resources to respond, is a completely different event from a large operational failure whose consequences ripple outward through cost, schedule, performance, and public trust long after anyone can do much about the underlying cause. The earlier you find out something is wrong, the more options you have. That is not a complicated idea, but it is one that organizations under schedule pressure have a persistent tendency to forget.

Both posts made the same underlying argument: failure itself is not the objective, but disciplined learning from failure is. Which brings me to Elon Musk and a very large rocket.

By Steve Jurvetson - Flickr, CC BY 2.0, https://commons.wikimedia.org/w/index.php?curid=153992086

Thomas Edison once observed:

“I have not failed. I’ve just found 10,000 ways that won’t work.”

Musk operates in something of that spirit, and SpaceX’s Starship test program has given him ample material to work with. On January 16, 2025, the upgraded Starship flying the program’s seventh flight test, carrying ten mock Starlink satellites, lifted off from the launch pad in South Texas with the full roar of its Raptor engines and streamed nominal telemetry for the first several minutes of flight. Then, about eight minutes in, contact with the upper stage was abruptly lost. The spacecraft broke apart in a flash of orange fire over the Caribbean, forcing dozens of commercial flights to divert and filling social media with dramatic video of the breakup [3]. To most observers, it looked like a catastrophic failure. To SpaceX engineers, it was a structured test event with data attached.

Edison framed failure as discovery. Modern systems engineering formalizes that intuition. But discovery is only valuable when it feeds disciplined design improvement rather than simply generating impressive footage, and that is where the interesting question actually lives.

The INCOSE SE Handbook is precise about what verification is for. It defines verification as the process of determining whether a system meets its specified requirements, and the critical word in that definition is “determining” [4]. Verification is not a formality conducted after the real engineering work is done. It is the mechanism by which deficiencies, inconsistencies, and unintended interactions are surfaced before a system reaches the people depending on it. Bahill and Henderson put the intent simply: verifying a system means building the system right [5]. When a test article fails during structured testing, that failure may be exactly the outcome verification is designed to produce.

The distinction that matters here is between verification failure and operational failure. Operational failure carries stakeholder consequences, reputational damage, and potentially loss of life. Verification failure occurs within a controlled development environment, with defined objectives, instrumentation, and data capture. The purpose is exposure, not performance. Integrated flight testing of a complex launch vehicle stresses the system across propulsion, structures, software, avionics, and thermal domains simultaneously, and no amount of modeling fully substitutes for that. Subsystem interactions, boundary conditions, and real-world loads reveal failure mechanisms that analysis alone may not predict.

This is where reliability engineering connects directly to verification. The Handbook defines reliability as the ability of a system to perform without failure for a specified time in a defined environment [4]. Reliability is not achieved by optimism. It is achieved through structured discovery of failure modes and disciplined incorporation of corrective action. Marques Jones, describing hardware reliability work at SpaceX, explained the job in terms any systems engineer would recognize: take the hardware, figure out why it failed, and then provide a fix so it can be tested again [6]. That is essentially test, analyze, correct, and retest. MIL-HDBK-189C formalizes the same concept: reliability improves when failures are identified, root-caused, corrected, and verified in subsequent builds [7]. Without that feedback loop, repetition is not progress. It is noise.
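One way to check whether the test, analyze, correct, and retest loop is actually producing growth is to fit a reliability growth model to the failure history. The sketch below uses the Crow-AMSAA power-law model, a tracking model commonly used in reliability growth work, with the standard maximum likelihood estimates for a time-truncated test. The function names and the failure times are hypothetical and chosen only for illustration; they are not SpaceX data.

```python
import math


def crow_amsaa_fit(failure_times, total_time):
    """Fit the power-law model N(t) = lam * t**beta to a time-truncated test.

    failure_times: cumulative test time at each observed failure.
    total_time: cumulative test time at the end of the observation period.
    Returns (lam, beta) as maximum likelihood estimates.
    """
    n = len(failure_times)
    if n == 0:
        raise ValueError("need at least one failure to fit the model")
    if any(t <= 0 or t > total_time for t in failure_times):
        raise ValueError("failure times must lie in (0, total_time]")
    log_sum = sum(math.log(total_time / t) for t in failure_times)
    if log_sum == 0:
        raise ValueError("all failures at total_time; beta is undefined")
    beta = n / log_sum
    lam = n / total_time ** beta
    return lam, beta


def instantaneous_mtbf(lam, beta, t):
    """Mean time between failures at time t: 1 / (lam * beta * t**(beta - 1))."""
    return 1.0 / (lam * beta * t ** (beta - 1))


# Hypothetical data: cumulative test hours at which six failures occurred.
failures = [10, 35, 90, 200, 420, 800]
lam, beta = crow_amsaa_fit(failures, total_time=1000)
print(f"beta = {beta:.3f}")
print(f"instantaneous MTBF at 1000 h = {instantaneous_mtbf(lam, beta, 1000):.1f} h")
```

In this model, a fitted beta below 1 means failures are arriving less often as testing accumulates, which is what effective corrective action should look like. A beta near or above 1 suggests the program is repeating tests without growing reliability.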

When a test vehicle is lost, the engineering question is not whether the event was dramatic. The question is whether the failure mechanism was understood, whether assumptions were updated, whether models were revised, and whether the design was modified before the next iteration. Root cause investigation, failure mode analysis, requirements traceability updates, and configuration control convert physical destruction into engineering insight. Evidence of incremental closure of test objectives, consistent corrective action, and measurable subsystem improvement signals genuine reliability growth. Evidence of repeated similar failures with confident press releases signals something else.
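The closure questions above can be made concrete with a simple failure log. The following is a minimal sketch, assuming a hypothetical `FailureRecord` structure in which a failure counts as closed only when a root cause, a corrective action, and a verifying retest are all recorded. The field names and sample entries are illustrative and do not describe any real program.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class FailureRecord:
    test_id: str
    failure_mode: str
    root_cause: Optional[str] = None
    corrective_action: Optional[str] = None
    verified_in_test: Optional[str] = None  # test that confirmed the fix

    def is_closed(self) -> bool:
        """Closed only when cause, fix, and verification are all recorded."""
        return all((self.root_cause, self.corrective_action, self.verified_in_test))


def open_records(records):
    """Failures still missing a root cause, a fix, or a verifying retest."""
    return [r for r in records if not r.is_closed()]


def repeated_modes(records):
    """Failure modes that appear in more than one test."""
    counts = Counter(r.failure_mode for r in records)
    return sorted(mode for mode, count in counts.items() if count > 1)


# Hypothetical log entries for illustration.
log = [
    FailureRecord("T-01", "valve stuck", "contamination", "add inlet filter", "T-02"),
    FailureRecord("T-02", "sensor dropout"),
    FailureRecord("T-03", "valve stuck"),
]
print([r.test_id for r in open_records(log)])
print(repeated_modes(log))
```

A failure mode that reappears after its corrective action was marked verified is the pattern worth escalating, because it means the root cause analysis or the verification was incomplete.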

The real systems engineering question is quieter than the videos on social media suggest.

Is your organization structurally designed to discover failure before it becomes unacceptable?

Are your tests designed to expose system limits rather than demonstrate compliance?

When something breaks, do you conduct rigorous root cause analysis, or do you move on?

Are design updates traceable to what you actually learned?

Failure during verification can strengthen a system. Failure during operations weakens trust. The difference is disciplined systems engineering. And disciplined systems engineering looks a lot like this: test, fail, revise, repeat.

Optional Reader Resource

Failure during verification is only valuable if it leads to disciplined design improvement. This checklist provides a structured framework to document test context, identify root cause, implement corrective action, and confirm reliability growth before the next iteration.

References

[1] Martin, Paul B. “Failure and the Importance of Lessons Learned.” SE Scholar Blog, 12 June 2016. https://se-scholar.com/se-blog/2016/6/12/failure-and-the-importance-of-lessons-learned

[2] Martin, Paul B. “The Value of Failure.” SE Scholar Blog, 3 May 2010. https://se-scholar.com/se-blog/2010/11/value-of-failure.html

[3] Roulette, Joey. “SpaceX’s Starship Explodes in Flight Test, Forcing Airlines to Divert.” Reuters, 17 Jan. 2025. https://www.reuters.com/technology/space/spacex-launches-seventh-starship-mock-satellite-deployment-test-2025-01-16/

[4] Walden, David D., et al., editors. INCOSE Systems Engineering Handbook: A Guide for System Life Cycle Processes and Activities. 5th ed., Wiley, 2023. https://www.incose.org/resources-publications/technical-publications/se-handbook/

[5] Bahill, A. Terry, and Steven J. Henderson. “Requirements Development, Verification, and Validation Exhibited in Famous Failures.” Systems Engineering, vol. 8, no. 1, 2005, pp. 1–14.

[6] Jones, Marques. Interview by Jill Anderson. School of Engineering and Computer Science Magazine, Baylor University, 18 Nov. 2020. https://magazine.ecs.baylor.edu/news/story/2022/marques-jones

[7] United States Department of Defense. MIL-HDBK-189C: Reliability Growth Management. 14 June 2011.