Image generated using DALL·E (OpenAI).

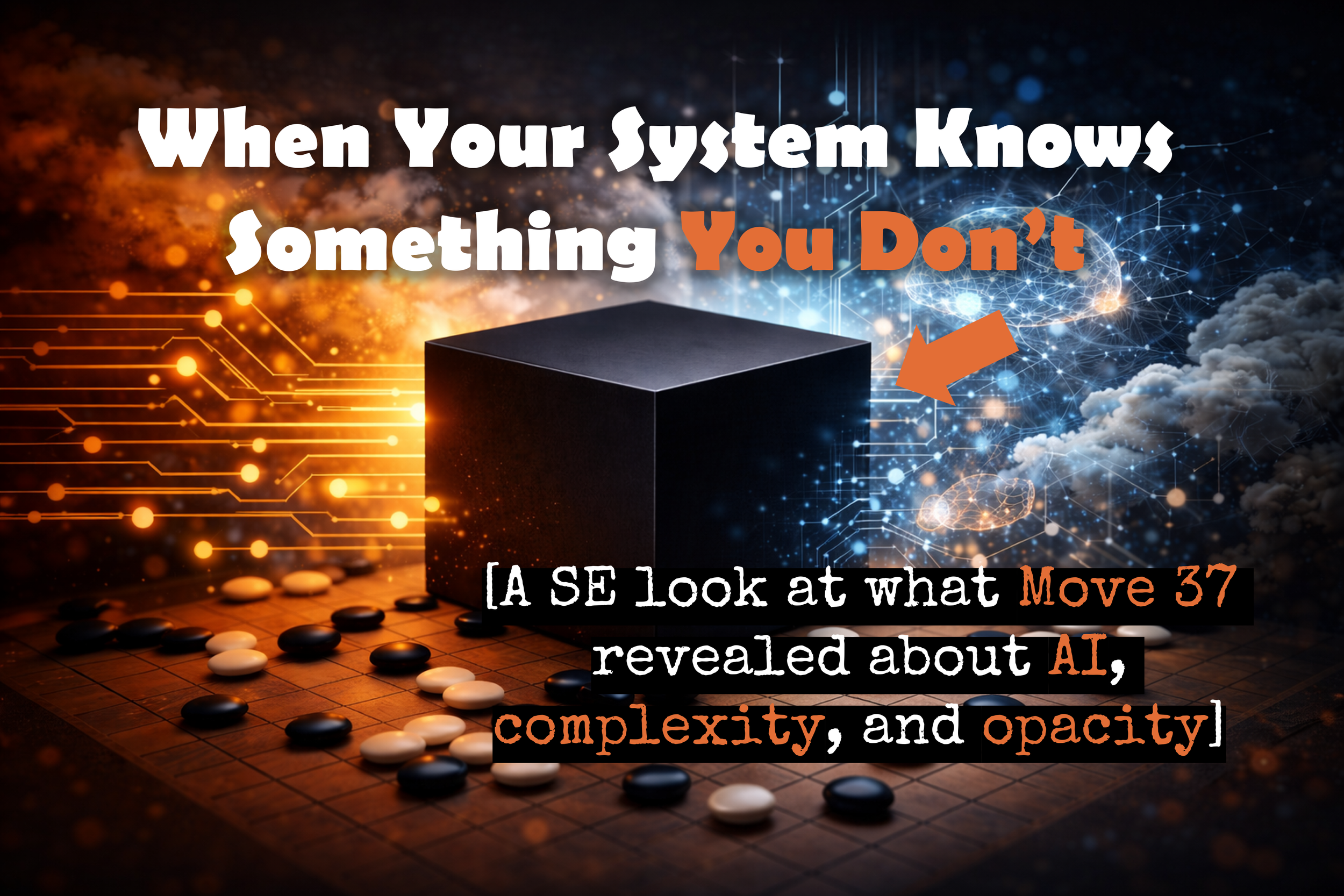

An SE look at what Move 37 revealed about AI, complexity, and opacity

Go is the oldest strategy game still played in its original form. It has been studied, debated, and refined across more than 2,500 years of human civilization, and the best players describe it less like a sport and more like a lifelong education in the nature of judgment itself. The possible board positions in a single game exceed the number of atoms in the observable universe, which means that unlike chess, no computer had ever come close to mastering it through brute calculation. Mastery required something closer to intuition, built through decades of study and the kind of accumulated wisdom that only humans were thought to possess.

Lee Sedol was, by most measures, the greatest Go player of his generation. Over a twenty-year professional career he accumulated 18 international titles and developed a playing style that commentators described as creative, occasionally unpredictable, and capable of finding solutions in positions that lesser players would simply concede. Before the match began in March 2016, he predicted publicly that he would win five games to zero, or at worst four to one. He was not being arrogant. He was being honest about how the odds looked to virtually everyone who understood the game.

His opponent was AlphaGo, a system built by DeepMind. Lee Sedol lost Game 1, which was startling but not yet alarming. These things happen. Then came Game 2, and Move 37.

AlphaGo placed a stone on the fifth line in a position so far outside conventional play that the commentators watching the live feed initially assumed the system had malfunctioned [1]. The move violated principles that experienced players treat as near-axioms. It was the kind of move that no professional would seriously consider, because the accumulated wisdom of centuries of Go suggested it was simply wrong. Lee Sedol stared at the board for a long moment. Then he stood up and walked out of the room. He spent roughly fifteen minutes away from the table trying to understand what he had just seen [2]. When he came back, he still could not find an answer. AlphaGo won Game 2, then Game 3, and the match. Lee Sedol won only Game 4, and he later described that single victory as one of the most meaningful of his career, not because it changed the outcome but because he had managed to find something the machine had not anticipated.

What he said afterward about Move 37 is what makes this more than a sports story. He called it beautiful. Not just effective, not just surprising, but genuinely beautiful in a way he had never imagined was possible. It had come from somewhere he could not access or fully describe, and he recognized it as belonging to a category of understanding beyond what he could claim for himself.

Lee Sedol during the AlphaGo match in Seoul, 2016. Photo courtesy of DeepMind.

The engineers at DeepMind were in roughly the same position. They could tell you which units in the neural network had activated during the decision. They could not tell you what the system was thinking, or whether thinking was even the right word for what had happened. The reasoning that produced Move 37 existed somewhere inside the system, but it was not expressed in any form that a human engineer could inspect and explain [3]. The system had been trained, not designed, and the internal logic governing its behavior had emerged from that training in ways its creators had not explicitly specified and could not fully reconstruct. As AI researcher Dario Amodei has noted, many modern machine learning systems are opaque in precisely this way, effectively black boxes even to their designers [4].

This is where the story stops being about Go and starts being about Systems Engineering.

For Systems Engineers, the phrase “black box” has a precise technical meaning. The INCOSE SE Handbook defines it as an external view of a system, a representation of what the system does rather than how it does it [5]. Used this way, the black box is not a problem. It is a deliberate abstraction that allows engineers to define what a system must accomplish before committing to how it will accomplish it. Stakeholder needs get translated into expected behaviors. The internal architecture remains open. This is good practice. It keeps engineers from locking in solutions before they understand the problem.

But Systems Engineering does not stop at the black box. The discipline requires engineers to eventually open it, to decompose the system into components, trace the interfaces between them, and develop enough understanding of internal behavior to verify that the system will perform as required under the full range of conditions it will encounter. Without that deeper understanding, verification becomes guesswork. Risk analysis loses its grounding. Predicting behavior under stress or at boundary conditions becomes substantially harder.
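A toy sketch in Python can make the gap between the two views concrete. Everything in it is hypothetical: `opaque_controller`, the requirement that the output equal twice the input, and the saturation threshold at 90 are assumptions invented for illustration, not taken from the INCOSE Handbook or from any real system.

```python
from typing import Callable


def opaque_controller(x: float) -> float:
    """Hypothetical stand-in for a system seen only from the outside."""
    # Internal rule the black-box tester cannot see: the output
    # saturates once the input exceeds 90.
    return 2.0 * x if x <= 90.0 else 180.0


def meets_requirement(system: Callable[[float], float], x: float) -> bool:
    """Assumed requirement for illustration: output equals twice the input."""
    return abs(system(x) - 2.0 * x) < 1e-9


# Black-box verification: inputs sampled without any internal knowledge.
sampled_inputs = [0.0, 10.0, 25.0, 50.0, 75.0]
black_box_pass = all(
    meets_requirement(opaque_controller, x) for x in sampled_inputs
)

# Verification informed by the internals: knowing about the threshold
# at 90 points the tester at inputs on and beyond that boundary.
boundary_inputs = [90.0, 90.1, 100.0]
white_box_pass = all(
    meets_requirement(opaque_controller, x) for x in boundary_inputs
)

print("sampled inputs pass:", black_box_pass)
print("boundary inputs pass:", white_box_pass)
```

The sampled inputs all satisfy the requirement, while the boundary inputs expose the violation. Nothing in the external view suggested that 90 was a value worth testing; only knowledge of the internals did.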

Complex systems have always presented a challenge here, because they regularly produce behaviors that emerge from the interaction of components rather than from any single element or explicit design decision. The INCOSE Complexity Primer acknowledges this directly, describing characteristics like emergence, feedback loops, and self-organization as defining features of systems whose behavior cannot be fully predicted from examination of their parts alone [6]. This is not unique to AI. Engineers who work on large software systems, ecological systems, financial systems, and sociotechnical systems encounter the same phenomenon. The question is always whether the Systems Engineers responsible for the system understand the interactions well enough to govern what the system does.

What makes modern AI systems unusual is the degree to which the internal logic remains largely inaccessible. In a conventional engineered system, the architecture might be complex, but it was designed by people who can in principle explain the decisions they made. In a neural network trained on large datasets, the internal representations that govern behavior emerge during training through billions of iterative adjustments that no individual designer specified or reviewed. As researcher Chris Olah has noted, these systems are not programmed in the traditional sense; they are trained, and their internal representations emerge during optimization [7]. The Systems Engineers define the architecture and the training process. The logic that ultimately drives the system’s behavior is something the system developed on its own, in a form that does not map cleanly onto human concepts or engineering documentation.

AlphaGo’s Move 37 is a vivid illustration of what this looks like in practice. The move was not a bug. It was not random. It reflected a genuine strategic insight that the system had developed through its training, one that no human had articulated or anticipated. It was also, in the precise sense that Systems Engineers use the term, emergent: a system-level capability arising from interactions within the model that had not been explicitly designed by anyone. The engineers at DeepMind built the conditions under which that insight could develop. They could not have told you in advance what specific moves it would produce, and they could not fully explain afterward how it arrived at the ones it chose.

The concern this raises for Systems Engineers is not whether AI systems are impressive. They clearly are. The concern is what opacity means for verification, validation, and long-term operational trust. A system that remains a black box to its designers is a system whose behavior under novel conditions is genuinely difficult to predict. It is harder to verify that it will perform as required across the full range of its intended operational environment. It is harder to explain to stakeholders why it behaved as it did after the fact. And when it fails, it is harder to determine whether the failure was a one-time anomaly or a symptom of something structural.

Lee Sedol retired from professional Go in 2019. In his retirement announcement, he said that AI had made further competition feel pointless, because there was now an entity that could not be defeated [8]. That is one way to read what happened in Seoul in 2016. Another is that what AlphaGo revealed was not the futility of human effort but the genuine novelty of a system whose internal logic had outgrown its designers’ ability to explain it. That is a problem Systems Engineers will be working on for a long time.

Complex systems will always surprise their designers. The goal of Systems Engineering is not to eliminate that surprise but to understand the system well enough to design, verify, and operate it responsibly, and to know honestly where that understanding runs out.

Are the systems being deployed today understood well enough to trust? And if not, does the organization deploying them know clearly where that understanding ends?

Optional Reader Resource

Use this checklist to quickly assess whether the system you are working on may exhibit characteristics of complexity. The items are inspired by concepts discussed in the INCOSE publication A Complexity Primer for Systems Engineers (2021).

References

[1] Silver, David, et al. “Mastering the Game of Go with Deep Neural Networks and Tree Search.” Nature, vol. 529, 2016, pp. 484–489. https://www.nature.com/articles/nature16961

[2] Kohs, Greg, director. AlphaGo. DeepMind, 2017. https://www.youtube.com/watch?v=WXuK6gekU1Y

[3] Ibid.

[4] Amodei, Dario, et al. “Concrete Problems in AI Safety.” arXiv, 2016. https://arxiv.org/abs/1606.06565

[5] International Council on Systems Engineering (INCOSE). Systems Engineering Handbook: A Guide for System Life Cycle Processes and Activities. 5th ed., Wiley, 2023. https://www.incose.org/resources-publications/technical-publications/se-handbook/

[6] International Council on Systems Engineering (INCOSE). A Complexity Primer for Systems Engineers. INCOSE Technical Publication, 2021.

[7] Olah, Chris. “The Building Blocks of Interpretability.” Distill, 2018. https://distill.pub/2018/building-blocks/

[8] Byford, Sam. “Go champion Lee Sedol retires, citing AI that ‘cannot be defeated.’” The Verge, 27 Nov. 2019. https://www.theverge.com/2019/11/27/20985260/go-champion-lee-sedol-retires-ai-cannot-be-defeated-alphago